Prerequisites

These are the prerequisites for setting up a full private LTE Magma deployment. Additional prerequisites for developers can be found in the developer's guide.

Development Tools

Install the following tools:

- Docker and Docker Compose

- Homebrew (macOS users only)

- VirtualBox

- Vagrant

Replace brew with your OS-appropriate package manager as necessary:

$ brew install python3

$ pip3 install ansible fabric3 jsonpickle requests PyYAML

$ vagrant plugin install vagrant-vbguest
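Once the tools are installed, a short check along the following lines can confirm that they are all on your PATH. This is only a sketch: the helper name missing_tools is ours, and the command names are assumptions (VBoxManage stands in for VirtualBox, fab is the entry point installed by fabric3, and newer Docker installs may ship Compose as a docker subcommand rather than a docker-compose binary).

```shell
#!/bin/sh
# Print the name of every command in the argument list that is not on PATH.
# Prints nothing when all of them are found.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || echo "$tool"
  done
}

# Assumed command names for the tools listed in this section; adjust as needed.
missing_tools docker docker-compose VBoxManage vagrant python3 pip3 ansible fab
```

Any name that is printed still needs to be installed, or is installed under a different command name on your system.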

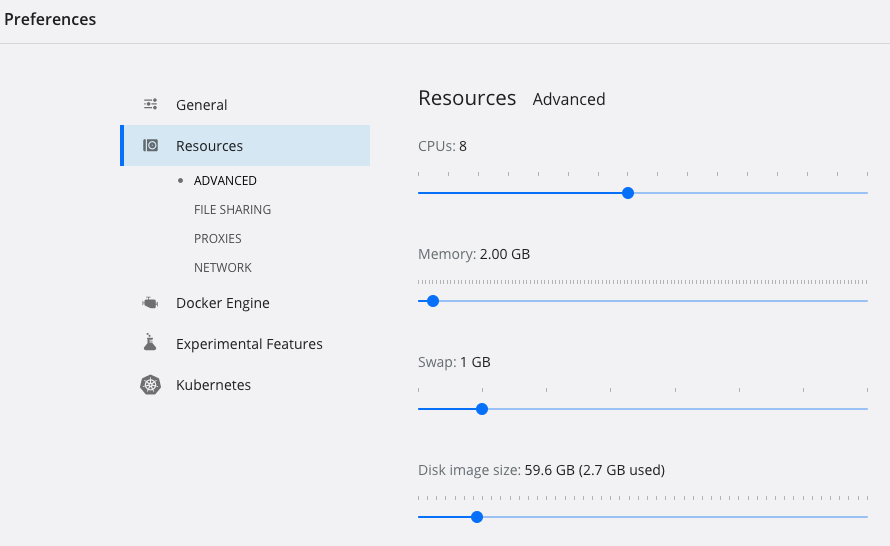

If you are on macOS, start Docker for Mac and increase the memory allocation for the Docker engine to at least 4GB (Preferences -> Advanced).
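To see how much memory the engine currently has, you can ask Docker directly. The sketch below assumes the docker CLI is on your PATH and the engine is running; it reads the MemTotal field from docker info, which is reported in bytes. The helper name bytes_to_mib is ours.

```shell
#!/bin/sh
# Convert a byte count to whole mebibytes (rounded down).
bytes_to_mib() {
  echo $(( $1 / 1024 / 1024 ))
}

# Only report a figure when the docker CLI exists and the engine answers.
if mem_bytes=$(docker info --format '{{.MemTotal}}' 2>/dev/null) && [ -n "$mem_bytes" ]; then
  echo "Docker engine memory: $(bytes_to_mib "$mem_bytes") MiB (this guide asks for at least 4GB)"
else
  echo "Could not query Docker; is the engine running?"
fi
```

The reported figure may be a little lower than the value set in Preferences, so treat it as a rough confirmation of the setting rather than an exact match.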

Build/Deploy Tooling

We support building the AGW and Orchestrator on macOS and Linux host operating systems. Doing so in a Windows environment should be possible but has not been tested. If you are on a Windows host, you may prefer to use a Linux virtual machine.

First, follow the previous section on development tools. Then, install some additional prerequisite tools (replace brew with your OS-appropriate package manager as necessary):

$ brew install aws-iam-authenticator kubernetes-cli kubernetes-helm python3 terraform

$ pip3 install awscli

$ aws configure

Provide the access key ID and secret key for an administrator user in AWS (don't use the root user) when prompted by aws configure. Skip this step if you will use something else for managing AWS credentials.
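After configuring credentials, you may want to confirm which identity the AWS CLI is actually using. The following sketch calls aws sts get-caller-identity and flags the case where the returned ARN belongs to the account root user. The helper name is_root_arn is ours, and the check assumes root ARNs have the form arn:<partition>:iam::<account-id>:root.

```shell
#!/bin/sh
# Succeed if the ARN belongs to an account root user,
# e.g. arn:aws:iam::123456789012:root (the account ID here is a placeholder).
is_root_arn() {
  case "$1" in
    arn:*:iam::*:root) return 0 ;;
    *) return 1 ;;
  esac
}

# Ask AWS which identity the current credentials resolve to.
if arn=$(aws sts get-caller-identity --query Arn --output text 2>/dev/null); then
  if is_root_arn "$arn"; then
    echo "Credentials belong to the root user ($arn); use an administrator user instead"
  else
    echo "Credentials belong to: $arn"
  fi
else
  echo "No working AWS credentials found"
fi
```

This only identifies the caller. It does not verify that the user has administrator permissions.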

Production Hardware

Access Gateways

Access gateways (AGWs) can be deployed on any AMD64 architecture machine that can support a Debian Linux installation. The basic system requirements for the AGW production hardware are:

- 2+ physical ethernet interfaces

- AMD64 dual-core processor with a clock speed of around 2GHz or faster

- 2GB RAM

- 128GB-256GB SSD storage

In addition, in order to build the AGW, you should have on hand:

- A USB stick with 2GB+ capacity to load a Debian Stretch ISO

- Peripherals (keyboard, screen) for your production AGW box for use during provisioning
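If the candidate AGW machine already runs Linux, a quick inspection like the sketch below can help compare it against the system requirements listed above. It is an illustration under assumptions, not a supported tool: the helper name count_physical_ifaces is ours, it relies on the Linux sysfs layout under /sys/class/net, and it does not check RAM or storage.

```shell
#!/bin/sh
# Count network interfaces backed by a hardware device. On Linux, entries in
# /sys/class/net for virtual interfaces (lo, bridges, veth pairs) have no
# "device" link. Wireless adapters are counted too, so confirm the result.
count_physical_ifaces() {
  count=0
  for dev in "$1"/*/device; do
    if [ -e "$dev" ]; then
      count=$((count + 1))
    fi
  done
  echo "$count"
}

echo "Architecture: $(uname -m) (x86_64 corresponds to AMD64)"
echo "Logical CPUs: $(getconf _NPROCESSORS_ONLN)"
echo "Physical network interfaces: $(count_physical_ifaces /sys/class/net) (2 or more required)"
```

Note that the CPU figure counts logical processors, which can exceed the number of physical cores on machines with hyper-threading.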

RAN Equipment

We have currently tested with the following EnodeBs:

- Baicells Nova 233 TDD Outdoor

- Baicells Nova 243 TDD Outdoor

- Assorted Baicells indoor units (for lab deployments)

Support for other RAN hardware can be implemented inside the enodebd service on the AGW, but we recommend starting with one of these EnodeBs.

Orchestrator and NMS

Orchestrator deployment depends on the following components:

- An AWS account

- A Docker image repository (e.g. Docker Hub, JFrog)

- A registered domain for Orchestrator endpoints

We recommend deploying the Orchestrator cloud component of Magma into AWS. Our open-source Terraform scripts target an AWS deployment environment, but if you are familiar with DevOps and are willing to roll your own, Orchestrator can run on any public or private cloud with a Kubernetes cluster available. The deployment documentation assumes an AWS deployment environment. If this is your first time using or deploying Orchestrator, we recommend that you follow this guide before attempting to deploy it elsewhere.

You will also need a Docker image repository to which you can publish the various Orchestrator NMS containers. We recommend using a private repository for this.